Retinal-Resolution Varifocal VR

Lead Product Design Prototyper

Varifocal VR is a Time Machine project that offers a glimpse of the future of immersive experiences: displays that adjust focus dynamically to match the way our eyes naturally focus.

Butterscotch Varifocal (BSV) is a state-of-the-art VR research prototype developed at Meta Reality Labs. It combines near-retinal resolution with dynamic varifocal optics. The varifocal optics address the long-standing Vergence-Accommodation Conflict (VAC), allowing users to focus naturally on virtual objects as close as 20 centimeters from their eyes.

As a Product Design Prototyper on this “Time Machine” project, I led software development for the prototype’s primary executive demonstration, a bespoke 5-minute VR experience engineered to highlight the unique benefits of varifocal technology. It was built through rapid iteration and close collaboration with research scientists and hardware engineers, and was showcased at SIGGRAPH 2023, where it received recognition in the Digital Content Association of Japan’s Official Selection.

The Butterscotch Varifocal Prototype

The BSV prototype is a “Time Machine” research vehicle built to pass the Visual Turing Test. It combines two cutting-edge display technologies:

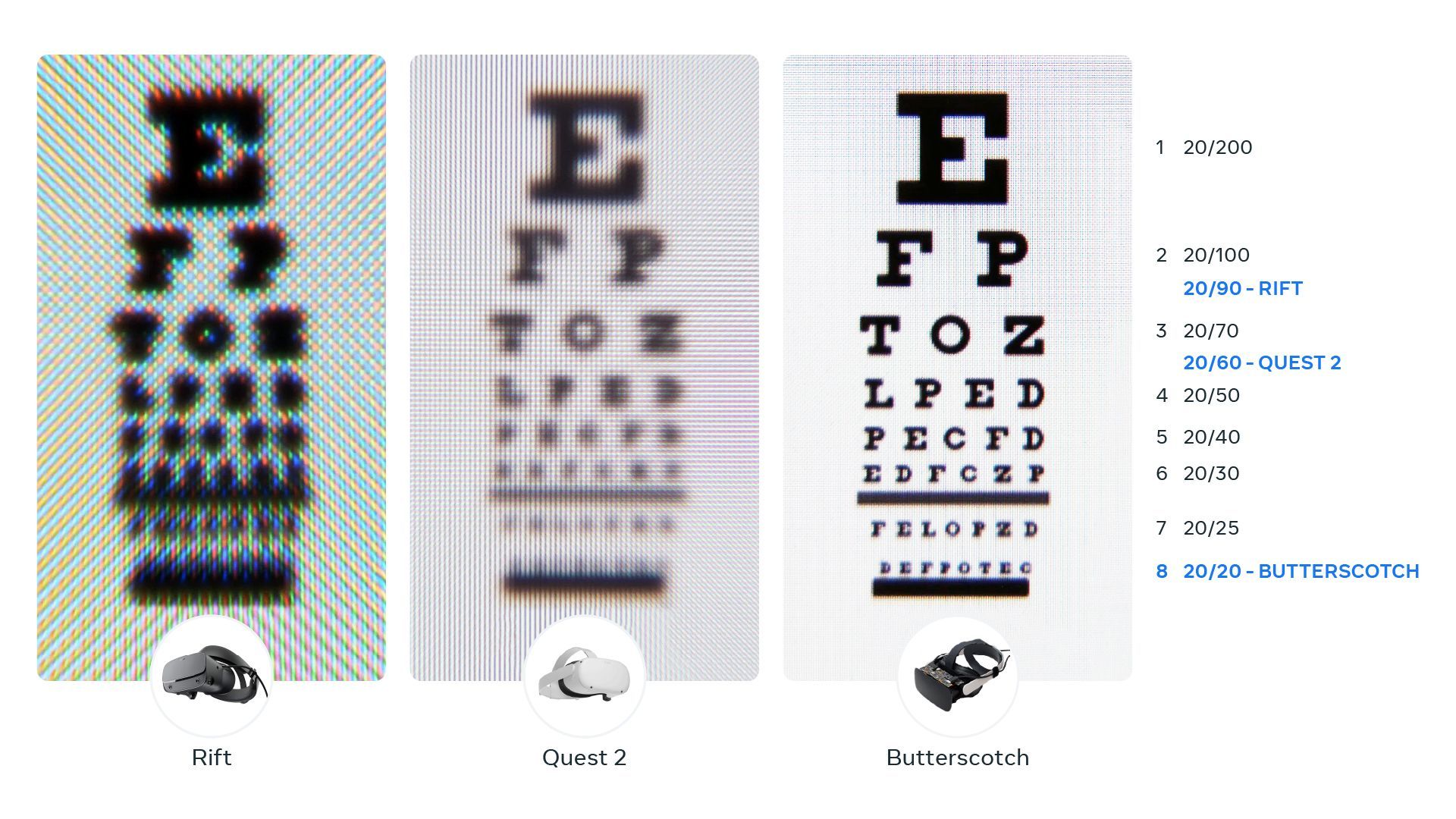

- Retinal Resolution: Using 2880x2880 LCD panels and a condensed 50-degree field of view, the headset achieves 56 pixels per degree (PPD). This density eliminates visible pixelation and aliasing, rendering tiny text and intricate textures with lifelike clarity.

- Varifocal Optics: Using mechanical actuators and a highly precise eye-tracking system (four cameras total), the display panels physically move back and forth to adjust the focal distance on the fly.

This mechanical movement is coupled with Dynamic Distortion Correction to eliminate “pupil swim,” allowing the eyes to focus naturally on objects at any depth without geometric warping.
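As a rough sanity check on the resolution figure above, average pixel density can be estimated by dividing the panel width in pixels by the field of view. The sketch below assumes pixels are spread evenly across the 50-degree field of view, which real lenses do not guarantee. The function name is illustrative, and the source does not say how the quoted 56 PPD was measured.

```python
def average_ppd(pixels_across: int, fov_degrees: float) -> float:
    """Average pixels per degree, assuming a uniform spread across the FOV."""
    if fov_degrees <= 0:
        raise ValueError("fov_degrees must be positive")
    return pixels_across / fov_degrees


# Panel width and field of view as quoted in the text.
print(average_ppd(2880, 50))  # 57.6
```

This naive estimate lands close to the quoted 56 PPD.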

Designing the Executive Demo

When preparing to debut the BSV prototype to executives and at internal demos, the team initially considered reusing an older demo or a clinical user-study app. I advocated for a bespoke, 5-minute demonstration that explicitly highlighted the hardware’s unique capabilities without diluting the narrative.

Choosing the Right Format

My approach was shaped by a lesson learned during a school game jam, where a high-quality but long-form puzzle game lost to shorter, multiplayer experiences simply because fewer people had time to play it. For the BSV demo, the goal was maximum impact in minimum time (~5 minutes). I shifted the focus away from clinical study apps—which can feel tedious—toward a high-fidelity experience centered on high-resolution objects and close-distance interaction.

Breaking “Good” VR Design Practices

Current VR best practices often serve to mask hardware limitations—for instance, avoiding high spatial frequency details (like small text) to prevent aliasing, and keeping interactive objects 1.5 meters away to mitigate the Vergence-Accommodation Conflict.

To effectively demonstrate the value of the BSV prototype, I intentionally broke these rules.

The core of the demo featured highly detailed objects with meticulously tuned lighting and high-resolution textures that users were encouraged to bring as close as 20 cm to their faces. By identifying what doesn’t work in current headsets and showcasing how the BSV prototype excels in those exact areas, the value of the technology became immediately apparent.
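To put the 1.5 m and 20 cm distances in perspective, focus demand is usually expressed in diopters (the reciprocal of the distance in meters). The vergence-accommodation conflict can then be approximated as the gap between where the eyes converge and where the optics are focused. The sketch below is illustrative only: the function names are hypothetical, and the 1.5 m fixed focal distance for a conventional headset is an assumption, not a figure from the BSV team.

```python
def diopters(distance_m: float) -> float:
    """Focus demand in diopters for a target at the given distance."""
    if distance_m <= 0:
        raise ValueError("distance_m must be positive")
    return 1.0 / distance_m


def vac_mismatch(object_distance_m: float, focal_distance_m: float) -> float:
    """Absolute gap (in diopters) between vergence demand and optical focus."""
    return abs(diopters(object_distance_m) - diopters(focal_distance_m))


# Assumption: a conventional headset with optics fixed at 1.5 m.
FIXED_FOCUS_M = 1.5

for d in (1.5, 0.5, 0.2):
    fixed = vac_mismatch(d, FIXED_FOCUS_M)
    # An idealized varifocal display moves its focus to the object distance.
    varifocal = vac_mismatch(d, d)
    print(f"{d:.1f} m: fixed-focus {fixed:.2f} D, varifocal {varifocal:.2f} D")
```

Under these assumptions, an object held at 20 cm produces a mismatch of roughly 4.3 diopters on the fixed-focus display, while an idealized varifocal display keeps it at zero.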

Rapid Iteration and Collaboration

Starting from a modified Interaction SDK sample scene, the experience was designed, tested, and finalized in just two weeks. This required intense, rapid iteration—sharing work-in-progress early to gather high-quality feedback.

Through continuous back-and-forth feedback with the engineering team, we meticulously curated the environment. We experimented with a movable eye chart whose size was independent of distance (critical for measuring varifocal performance), but eventually removed it because it proved too confusing for non-expert audiences. This high-speed, feedback-driven loop was crucial to delivering an intuitive and mind-blowing showcase of future VR technology.
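One way to read "size independent of distance" is that the chart kept a constant angular size, so it looked equally large wherever it was placed and only the focus demand changed. Under that assumption (the source does not describe the implementation), the chart's world-space height would scale linearly with its distance from the eye. The names and the 5-degree target below are hypothetical.

```python
import math


def world_height_for_angle(distance_m: float, angle_deg: float) -> float:
    """World-space height that subtends angle_deg when viewed from distance_m."""
    half_angle = math.radians(angle_deg) / 2.0
    return 2.0 * distance_m * math.tan(half_angle)


def subtended_angle_deg(distance_m: float, height_m: float) -> float:
    """Visual angle (degrees) of an object of height_m seen from distance_m."""
    return math.degrees(2.0 * math.atan(height_m / (2.0 * distance_m)))


# Assumption: the chart should always subtend 5 degrees of visual angle.
TARGET_ANGLE_DEG = 5.0

for d in (0.2, 0.5, 1.5):
    h = world_height_for_angle(d, TARGET_ANGLE_DEG)
    print(f"{d:.1f} m: height {h * 100:.2f} cm, "
          f"angle {subtended_angle_deg(d, h):.2f} deg")
```

The chart shrinks in world space as it is pulled closer, yet its visual angle stays fixed, which isolates the effect of focus from the effect of apparent size.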

Media Coverage

Hands-On: Meta's Retinal Resolution Varifocal Prototype

Meta's new Butterscotch Varifocal prototype combines retinal resolution with dynamic focal displays to eliminate the vergence-accommodation conflict.

Reality Labs Research Previews Future VR Display Systems at SIGGRAPH 2023

A deep dive into the Butterscotch Varifocal and Flamera prototypes that are driving the future of Meta's display technologies.