Boba 3: Ultra-Wide FOV VR

Lead Product Design Prototyper

Boba 3 is a research prototype that pushes the limits of immersion with a massive 180-degree field of view, covering 90% of human vision without compromising the headset's form factor.

Boba 3 is an experimental VR display system designed to achieve a massive, peripheral-filling field of view (FOV) while maintaining a wearable, balanced form factor. Unveiled at SIGGRAPH 2025 in the Emerging Technologies program, it represents a significant leap from previous wide-FOV “hammerhead” prototypes by using a novel optical architecture.

The Boba 3 Prototype

The human visual field spans nearly 200 degrees horizontally, yet most consumer VR headsets are limited to around 100 degrees. Boba 3 pushes this boundary to 180° horizontal by 120° vertical, covering approximately 90% of the human field of view.

Optical & Display Specifications

To achieve this width without making the headset prohibitively large or heavy, Boba 3 uses dual-element pancake lenses featuring a world-first high-curvature reflective polarizer layer. Widening the FOV usually lowers angular resolution, because the same pixels are stretched across more degrees. Boba 3 avoids this with dual 4K LCD panels that reach 30 pixels per degree (PPD), slightly higher than the Meta Quest 3 but spread across nearly twice the area.
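The trade-off between FOV and angular resolution can be made concrete with a little arithmetic. The sketch below is only a rough illustration: it assumes that "4K" means 3840 pixels along one axis of a panel and that pixels are spread evenly across the view, which real lenses do not do. The function names are hypothetical, and the per-eye FOV it prints is a back-of-the-envelope figure, not a published Boba 3 specification.

```python
def average_ppd(pixels_across: int, fov_degrees: float) -> float:
    """Average pixels per degree when pixels are spread evenly over a field of view."""
    if fov_degrees <= 0:
        raise ValueError("fov_degrees must be positive")
    return pixels_across / fov_degrees


def fov_for_target_ppd(pixels_across: int, target_ppd: float) -> float:
    """Field of view (degrees) that a panel can cover at a given average PPD."""
    if target_ppd <= 0:
        raise ValueError("target_ppd must be positive")
    return pixels_across / target_ppd


# Assumption: "4K" is taken as 3840 pixels along one axis of a single panel.
PANEL_PIXELS = 3840

# At the stated 30 PPD, one panel could cover this many degrees on average.
per_eye_fov = fov_for_target_ppd(PANEL_PIXELS, 30.0)
print(per_eye_fov)  # 128.0

# The same panel stretched over a wider view loses angular resolution.
print(average_ppd(PANEL_PIXELS, 160.0))  # 24.0
```

The second call shows the usual penalty: stretching the same panel over a wider view lowers the average PPD, which is why higher-resolution panels are needed to hold 30 PPD at this FOV.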

Designing the SIGGRAPH 2025 Experience

As the Lead Product Design Prototyper, I led the design direction for the primary demonstration experience shown at SIGGRAPH 2025. This role involved interfacing deeply with research scientists and the engineering team to ensure the final production met their rigorous hardware standards while presenting the technology’s benefits effectively to an audience.

Pre-Production & Planning

The Boba 3 demo was structured to showcase the benefits of an ultra-wide FOV in both Mixed Reality (MR) and Virtual Reality (VR) settings. It was initially planned as a standard presentation, but we reworked it into an immersive 3D experience with a stereoscopic presenter to better convey the benefits of passthrough and peripheral immersion.

- Scripting & Direction: We scripted the entire scenario, directing the presenter’s gestures toward virtual 2D panels and 3D exploded views of the headset components.

- Space Replication: We designed the experience within a digital replica of the SIGGRAPH booth space to ensure that distance, direction, and grounding were perfectly accurate to the real-world environment.

- FOV Toggling: A key feature of the demo let users toggle the FOV between a standard Quest 3 view and the Boba 3 view, providing an immediate “a-ha” moment that made the technology’s value obvious.

Technical Implementation

Implementing a high-fidelity demo for a non-standard research prototype presented several unique engineering challenges.

Stereoscopic Video & Grounding

To create a grounded, present-feeling digital person, we captured full-body stereoscopic video on a green screen. Achieving realism required correcting disparities between the passthrough camera, the virtual server, and the video’s eye content.

Any error in these parameters (scale, perspective, or tilt) would cause the actor to appear to “sink” into the floor or move with the user’s head. I spent significant time manually tuning these grounding parameters until the digital presenter felt indistinguishable from a physical person standing in the booth.

“First Encounters” Without Mesh Data

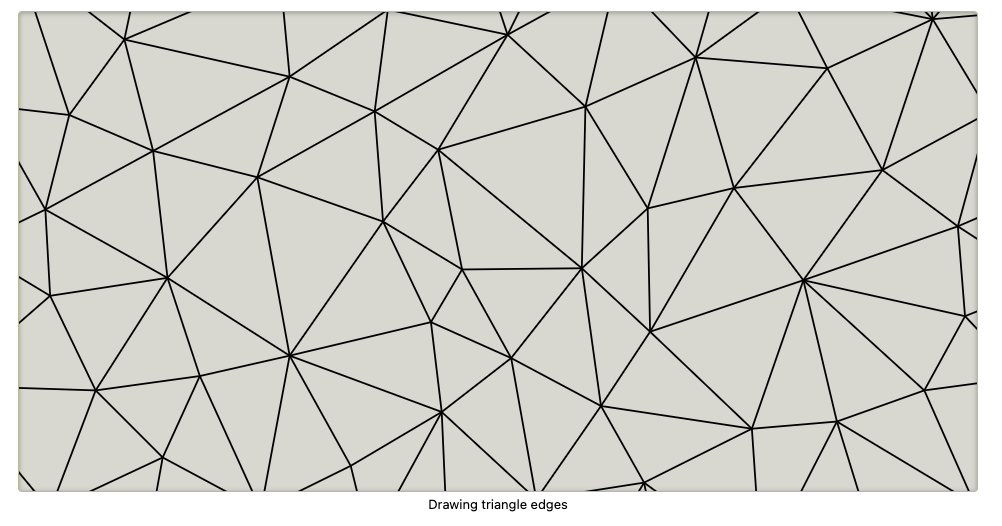

In the standard “First Encounters” MR game, walls break based on environmental mesh data. However, since raw mesh data was inaccessible on the Boba prototype, I used Delaunator to triangulate quad geometry and procedurally generate breakable segments from it. The resulting triangles were randomly grouped to create a natural, “cracked” appearance while maintaining high performance.
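The grouping step can be sketched roughly as follows. This is not the demo's actual code. To stay self-contained it swaps in a simple grid split for the Delaunator triangulation, and the random region-growing strategy, function names, and parameters are all assumptions made for illustration.

```python
import random
from collections import defaultdict


def triangulate_quad(width, height, cols, rows):
    """Split a width x height quad into 2 * cols * rows triangles.

    Stand-in for a Delaunay triangulation: returns (points, triangles),
    where each triangle is a tuple of three indices into points.
    """
    points = [(x * width / cols, y * height / rows)
              for y in range(rows + 1) for x in range(cols + 1)]
    triangles = []
    for y in range(rows):
        for x in range(cols):
            a = y * (cols + 1) + x   # bottom-left corner of the cell
            b = a + 1                # bottom-right
            c = a + cols + 1         # top-left
            d = c + 1                # top-right
            triangles.append((a, b, d))
            triangles.append((a, d, c))
    return points, triangles


def group_triangles(triangles, group_count, seed=0):
    """Assign every triangle to one of group_count edge-connected groups."""
    # Map each undirected edge to the triangles that share it.
    edge_owners = defaultdict(list)
    for index, tri in enumerate(triangles):
        for i in range(3):
            edge = tuple(sorted((tri[i], tri[(i + 1) % 3])))
            edge_owners[edge].append(index)

    # Two triangles are neighbors when they share an edge.
    neighbors = defaultdict(set)
    for owners in edge_owners.values():
        for t in owners:
            neighbors[t].update(o for o in owners if o != t)

    rng = random.Random(seed)
    group_of = {}
    frontier = []
    # Pick distinct seed triangles, one per group.
    for group, start in enumerate(rng.sample(range(len(triangles)), group_count)):
        group_of[start] = group
        frontier.append(start)

    # Grow the groups outward in random order until every triangle is claimed.
    while frontier:
        current = frontier.pop(rng.randrange(len(frontier)))
        for n in neighbors[current]:
            if n not in group_of:
                group_of[n] = group_of[current]
                frontier.append(n)
    return [group_of[i] for i in range(len(triangles))]


# Hypothetical wall panel: 4 m x 3 m, broken into 12 irregular chunks.
points, triangles = triangulate_quad(4.0, 3.0, cols=8, rows=6)
groups = group_triangles(triangles, group_count=12, seed=7)
```

Because each triangle joins the group of a neighbor it shares an edge with, every chunk stays in one piece, and changing `seed` yields a different crack pattern from the same geometry.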

Overcoming Engine Limitations

Developing for Boba 3 required navigating several Unity-specific hurdles:

- Headset Canting & Tilting: The prototype uses display canting and clocking to optimize rendering. I had to bridge the gap between the older Oculus plugin (which supported these axes but blocked passthrough) and the newer OpenXR plugin (which supported MR but lacked full rotation support).

- WFOV Frustum Culling: Wide FOV rendering often causes objects to “pop” in and out at the periphery because the default culling frustum is narrower than what the displays actually show. I implemented a custom script to enlarge the frustum described by the culling matrix, ensuring visual stability across the entire 180° field.

- Latency Equalization: A common challenge in wide-FOV MR is the tendency to treat the camera feed as “ground truth.” I had to equalize the latency between the virtual content and the passthrough feed to prevent virtual objects from “swimming” as the user moved their head.
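The culling issue from the list above can be illustrated with a small, engine-independent sketch. It assumes an OpenGL-style symmetric perspective matrix and invented FOV values; the function names are hypothetical, and it does not reproduce the actual Unity script. Unity does expose a `Camera.cullingMatrix` property for overriding the matrix used for culling, but whether the demo used it is not stated here.

```python
import math


def projection_matrix(h_fov_deg, v_fov_deg, near, far):
    """OpenGL-style symmetric perspective matrix (camera looks down -Z)."""
    sx = 1.0 / math.tan(math.radians(h_fov_deg) / 2.0)
    sy = 1.0 / math.tan(math.radians(v_fov_deg) / 2.0)
    return [
        [sx, 0.0, 0.0, 0.0],
        [0.0, sy, 0.0, 0.0],
        [0.0, 0.0, (far + near) / (near - far), 2.0 * far * near / (near - far)],
        [0.0, 0.0, -1.0, 0.0],
    ]


def is_inside_frustum(matrix, point):
    """Return True if a camera-space point survives the clip-space test."""
    x, y, z = point
    cx, cy, cz, cw = (row[0] * x + row[1] * y + row[2] * z + row[3]
                      for row in matrix)
    return cw > 0.0 and all(-cw <= c <= cw for c in (cx, cy, cz))


def point_at_yaw(yaw_deg, distance):
    """Camera-space point at eye height, yaw_deg to the right of forward."""
    yaw = math.radians(yaw_deg)
    return (distance * math.sin(yaw), 0.0, -distance * math.cos(yaw))


# Assumed values: a 100-degree default frustum versus a widened one.
narrow = projection_matrix(100.0, 90.0, 0.1, 100.0)
wide = projection_matrix(140.0, 120.0, 0.1, 100.0)

# An object 60 degrees off-axis, 5 m away.
target = point_at_yaw(60.0, 5.0)
print(is_inside_frustum(narrow, target))  # False: culled, so it would pop
print(is_inside_frustum(wide, target))    # True: kept by the wider frustum
```

A single perspective matrix cannot reach a full 180°, since the tangent term diverges at 90° half-angle, so a real implementation would need per-eye frusta or another strategy to cover the full field.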